In this article I'd like to share a solution to a problem I came across in my regular work: how to define service accounts for Cloud Functions using Terraform.

Prerequisites

For this to work, the hashicorp/google Terraform provider has to be used. Also, the service account that Terraform uses needs to have appropriate permissions:

- permissions to create resources – that depends on what exactly is in the Terraform code

- resourcemanager.projects.getIamPolicy + resourcemanager.projects.setIamPolicy for setting project-level policies

- secretmanager.secrets.getIamPolicy + secretmanager.secrets.setIamPolicy to use google_secret_manager_secret_iam_member

- potentially other permissions that end with *.*.setIamPolicy for resource-level policies.
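As a minimal sketch of the first prerequisite, the provider could be declared as shown here. The version constraint and the region are assumptions made for illustration and are not taken from our setup; prod_project_id is the placeholder project ID used in the examples of this article.

```hcl
terraform {
  required_providers {
    google = {
      source = "hashicorp/google"
      # Assumption: pin this to whichever provider release you have tested.
      version = ">= 5.0"
    }
  }
}

provider "google" {
  # Placeholder project ID, matching the examples in this article.
  project = "prod_project_id"
  # Assumption: the region is illustrative only.
  region = "europe-west1"
}
```

The credentials that this provider block picks up are the ones that need the permissions listed above.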

Context

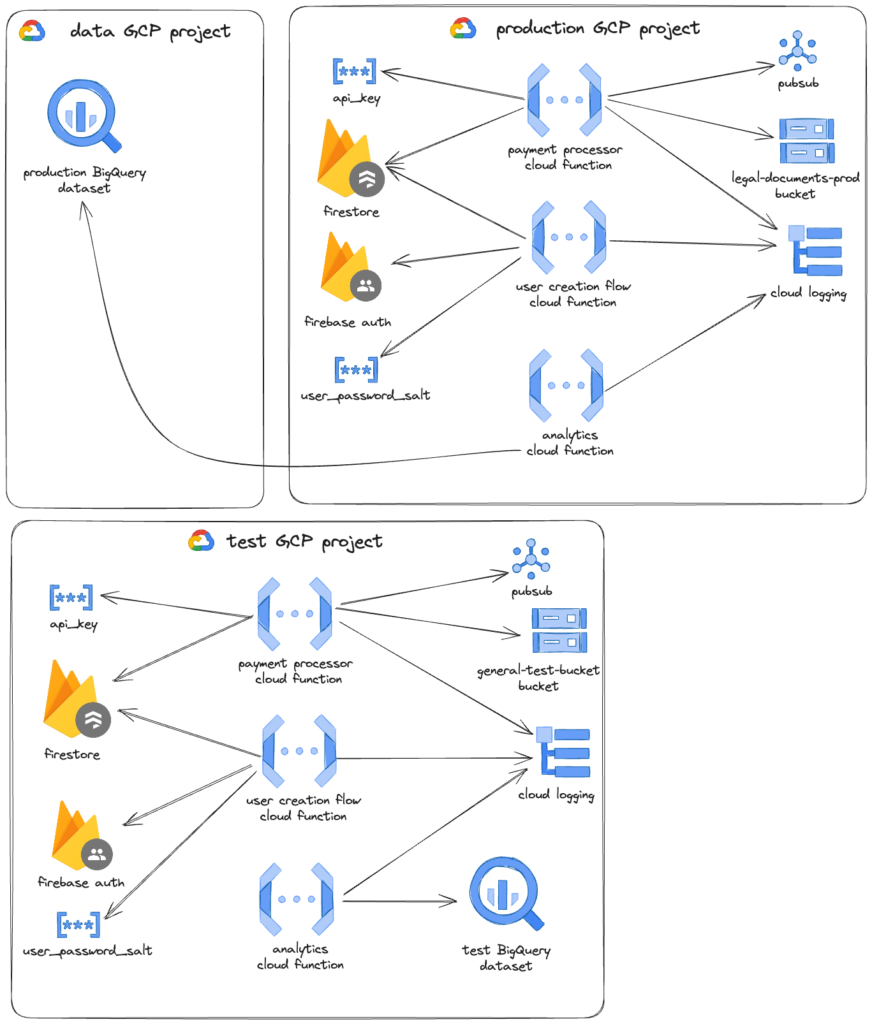

We have a system that’s based on Google Cloud Platform. Some of the logic is built using Cloud Functions and Firebase. We use Terraform for managing our infrastructure. To improve the security of the system, we wanted to create dedicated service accounts for specific functions so that they only have access to the resources they need.

For the sake of this article let’s assume we have 3 cloud functions:

- payment processor

  - needs to publish to PubSub,

  - can publish logs to the log explorer,

  - has access to the Cloud Datastore (Firestore),

  - has access to a specific Cloud storage bucket to upload legal documents,

  - has access to the api_key secret.

- user creation flow

  - has access to Firebase admin,

  - has access to the same Cloud Datastore (Firestore) database as the payment processor,

  - has access to the user_password_salt secret.

- analytics

  - for production needs to run BigQuery jobs but against a dataset in a different project,

  - for the test project, it uses the dataset from the same project.

We also have 3 GCP projects: production, test, and data. The Cloud Functions run only in production and test.

Here’s a visual representation of the system described above:

Terraform

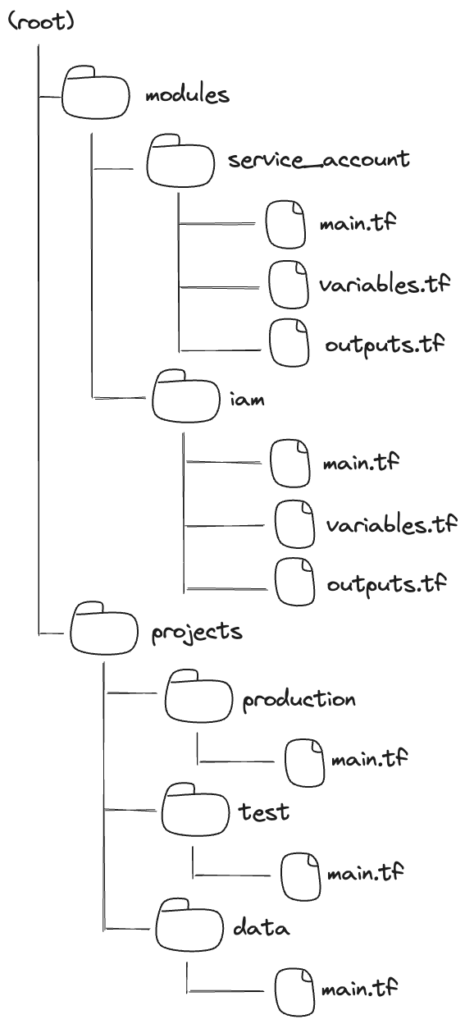

Terraform lets us define reusable modules so that we can abstract certain things away. We have two main directories: modules and projects. We also have an Atlantis configuration file (atlantis.yaml) that lets us define workflows so that we can plan or apply changes for specific projects.

My idea was to define two modules:

- service_account – which I would use to define a single service account

- iam – which I would use to define service accounts for the cloud functions, and later import it for each of the projects with proper parameters.

Service account module

I decided to use the following attributes for my service_account module.

- sa_id – the ID of the service account. It can be used later when referencing the service account with a data source. It has to be between 6 and 30 characters long.

- sa_name – a human-friendly name of the service account

- roles – a list of roles to apply to the service account

- restricted_roles – a map that attaches conditions to certain roles; this way we can, for example, grant access to specific resources only

- secrets – which Secret Manager secrets the function should have access to.

Here’s what the service account module could look like:

resource "google_service_account" "sa" {

project = var.project

account_id = var.sa_id

display_name = var.sa_name

}

resource "google_project_iam_member" "sa_iam" {

for_each = toset(var.roles)

project = var.project

role = each.value

member = google_service_account.sa.member

}

resource "google_project_iam_member" "sa_iam_condition" {

for_each = var.restricted_roles

project = var.project

role = each.key

member = google_service_account.sa.member

condition {

description = each.value.description

expression = each.value.expression

title = each.value.title

}

}

resource "google_secret_manager_secret_iam_member" "secret_member" {

for_each = toset(var.secrets)

project = var.project_number

secret_id = each.value

role = "roles/secretmanager.secretAccessor"

member = google_service_account.sa.member

}

Note that restricted roles could be approached differently. In this example, we define the IAM member at the project level. But we can also define it at the level of a specific resource, as we do for Secret Manager. However, not all resources support roles at the resource level, e.g. Cloud Datastore.
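For resources that do support resource-level policies, the pattern used for secrets can be repeated. The sketch below does this for Cloud Storage with google_storage_bucket_iam_member. The buckets variable is hypothetical and not part of the module shown above, and the identity running Terraform would additionally need storage.buckets.getIamPolicy and storage.buckets.setIamPolicy.

```hcl
# Hypothetical variable: names of the buckets the service account may use.
variable "buckets" {
  description = "Cloud Storage buckets that the service account should have object access to"
  type        = list(string)
  default     = []
}

# The role is granted on each bucket itself, so no IAM condition is needed.
resource "google_storage_bucket_iam_member" "bucket_member" {
  for_each = toset(var.buckets)

  bucket = each.value
  role   = "roles/storage.objectUser"
  member = google_service_account.sa.member
}
```

With something like this, the payment processor from the Context section could receive its legal documents bucket through buckets rather than through a conditional project-level role.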

One thing to pay attention to is that for google_secret_manager_secret_iam_member we use a project number, not a project ID. This may be misleading and easily overlooked, but that’s how Google defines resource names for the secrets.

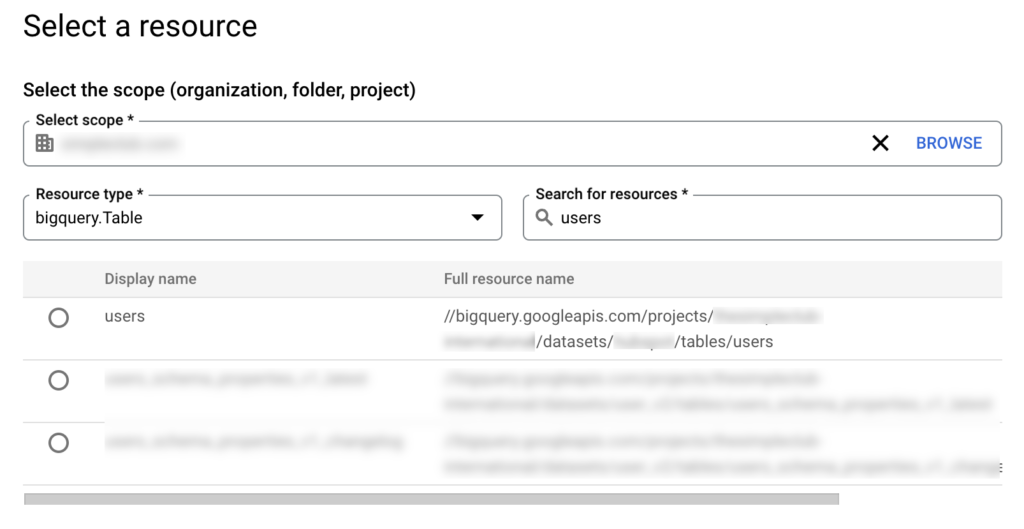

💡 Hint: You can also use Policy Troubleshooter or Policy Analyzer to see the full resource names of the specific resources you want to reference.
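If you would rather not hard-code the project number, it can be looked up from the project ID. This is only a sketch: it assumes the google_project data source and that the identity running Terraform is allowed to read the project (resourcemanager.projects.get). The module arguments mirror the ones used in the Projects section of this article.

```hcl
# Looks up project metadata, including the numeric project number.
data "google_project" "current" {
  project_id = "prod_project_id"
}

module "production_iam" {
  source = "../modules/iam"

  project = data.google_project.current.project_id
  # The data source exposes the number as a string; Terraform converts it
  # to the number type declared for project_number.
  project_number         = data.google_project.current.number
  legal_documents_bucket = "legal-documents-prod"
}
```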

You can see that we use the var.* notation here. We define these parameters in the variables.tf file.

variable "project" {

description = "The project where to deploy the infrastructure to."

type = string

}

variable "sa_id" {

description = "Service account ID - unique within the project."

type = string

}

variable "sa_name" {

description = "The display name of the service account"

type = string

}

variable "roles" {

description = "Roles to apply to the service account"

type = list(string)

default = []

}

variable "restricted_roles" {

description = "Roles to apply to the service account that include condition. Do not use it for secrets - they use `secrets` variable"

type = map(object({

title = string

description = string

expression = string

}))

default = {}

}

variable "secrets" {

description = "A list of secret ids that the service account should have access to"

type = list(string)

default = []

}

variable "project_number" {

description = "The GCP project number"

type = number

}
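Since the service account ID has to be between 6 and 30 characters long, the sa_id variable could optionally enforce that itself. The following is a sketch of a variant of the sa_id declaration, not what we have in our variables.tf; it checks only the length and none of the other naming rules.

```hcl
variable "sa_id" {
  description = "Service account ID - unique within the project."
  type        = string

  validation {
    # Only the length limit is checked here.
    condition     = length(var.sa_id) >= 6 && length(var.sa_id) <= 30
    error_message = "The sa_id must be between 6 and 30 characters long."
  }
}
```

A value that is too short or too long is then rejected during terraform plan instead of failing later in the API call.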

We can also define what the module exports, using outputs.tf:

output "email" {

value = google_service_account.sa.email

description = "The e-mail address of the service account."

}

output "name" {

value = google_service_account.sa.name

description = "The fully-qualified name of the service account."

}

output "unique_id" {

value = google_service_account.sa.unique_id

description = "The unique id of the service account."

}

This allows us to reference the service accounts that we created later on.
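The module itself relies on google_service_account.sa.member, so exporting that attribute as well is one optional extension. It is not part of our outputs.tf; the output name below is an assumption.

```hcl
# Optional extra output (assumption, not in the original module):
# the identity in the form serviceAccount:<email>, ready for IAM resources.
output "member" {
  value       = google_service_account.sa.member
  description = "The IAM member string of the service account."
}
```

Callers could then pass this value straight to the member argument of IAM resources without assembling the serviceAccount: prefix by hand.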

IAM module

In the main.tf of the iam module we can instantiate the service_account module once for each cloud function:

locals {

default_database_condition = {

description = "Access to the default database"

title = "Default database"

expression = "resource.type == \"firestore.googleapis.com/Database\" && resource.name == \"projects/${var.project}/databases/(default)\""

}

}

module "payment_processor_sa" {

source = "../service_account"

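project = var.project

project_number = var.project_number

sa_id = "payment-processor"

# project, project_number and sa_name have no defaults in the service_account module; the display name is illustrative.

sa_name = "Payment processor"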

roles = [

"roles/logging.logWriter",

"roles/pubsub.publisher"

]

restricted_roles = {

"roles/datastore.user" : local.default_database_condition,

"roles/storage.objectUser" : {

description = "Storage bucket where legal documents are stored"

title = "Legal documents bucket"

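# The trailing /objects/ keeps the prefix from also matching other buckets whose names start the same way.

expression = "resource.type == \"storage.googleapis.com/Object\" && resource.name.startsWith(\"projects/_/buckets/${var.legal_documents_bucket}/objects/\")"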

}

}

secrets = [

"api_key"

]

}

module "user_creation_flow_sa" {

source = "../service_account"

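project = var.project

project_number = var.project_number

sa_id = "user-creation"

# project, project_number and sa_name have no defaults in the service_account module; the display name is illustrative.

sa_name = "User creation flow"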

roles = [

"roles/logging.logWriter",

"roles/firebaseauth.admin"

]

restricted_roles = {

"roles/datastore.user" : local.default_database_condition,

}

secrets = [

"user_password_salt"

]

}

module "analytics_sa" {

source = "../service_account"

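project = var.project

project_number = var.project_number

sa_id = "analytics"

# project, project_number and sa_name have no defaults in the service_account module; the display name is illustrative.

sa_name = "Analytics"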

roles = [

"roles/logging.logWriter",

"roles/bigquery.jobUser"

]

}

Because we want to use the same condition for the datastore twice, we extracted it into a local value using the locals syntax.

Now, here we have two challenges:

- we want to use different Cloud Storage buckets for different projects

- we want to access the BigQuery dataset from a different project

So let’s first define the parameters for our IAM module:

variable "project" {

description = "The project where to deploy the infrastructure to."

type = string

}

variable "project_number" {

description = "The GCP project number."

type = number

}

variable "legal_documents_bucket" {

description = "Cloud storage bucket where we store legal documents"

type = string

}

And because production and test use BigQuery datasets from different projects, we can export the analytics service account email from the IAM module (alternatively, we can reference it using a data source):

output "analytics_sa_email" {

value = module.analytics_sa.email

description = "The e-mail address of the analytics service account."

}

Now, having defined the common configuration, let’s import it into the actual projects.

Projects

To recap – we have 3 GCP projects: production, test, and data. The Cloud Functions run only in production and test.

In production let’s import it like this:

module "production_iam" {

source = "../modules/iam"

project = "prod_project_id"

project_number = 9900999000

legal_documents_bucket = "legal-documents-prod"

}

In the test project the analytics function uses a dataset from the same project, so we can grant the BigQuery access right here using the exported value:

module "test_iam" {

source = "../modules/iam"

project = "test_project_id"

project_number = 55555555

legal_documents_bucket = "general-test-bucket"

}

resource "google_bigquery_dataset_iam_member" "test_analytics_bq" {

dataset_id = data.google_bigquery_dataset.test_dataset.dataset_id

role = "roles/bigquery.dataEditor"

member = "serviceAccount:${module.test_iam.analytics_sa_email}"

}

data "google_bigquery_dataset" "test_dataset" {

dataset_id = "test-dataset"

project = "test_project_id"

}

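And for the data project, since we don’t import the IAM module there, we can’t use the exported service account email. Instead, we can look up the production analytics service account using a data source:

data "google_service_account" "analytics_prod_sa" {

account_id = "analytics"

# The service account is created in the production project, not in the data project.

project = "prod_project_id"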

}

data "google_bigquery_dataset" "prod_dataset" {

dataset_id = "prod-dataset"

project = "data_project"

}

resource "google_bigquery_dataset_iam_member" "prod_analytics_bq" {

dataset_id = data.google_bigquery_dataset.prod_dataset.dataset_id

role = "roles/bigquery.dataEditor"

member = "serviceAccount:${data.google_service_account.analytics_prod_sa.email}"

}
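Another option, sketched here under the assumption that the account ID and the project ID stay stable, is to build the member string directly, because service account e-mails follow the ACCOUNT_ID@PROJECT_ID.iam.gserviceaccount.com format. The local names are hypothetical, and the resource is meant as a replacement for the data source lookup, not an addition to it.

```hcl
locals {
  # Hypothetical locals: the project that owns the analytics service account.
  analytics_sa_project = "prod_project_id"
  analytics_sa_member  = "serviceAccount:analytics@${local.analytics_sa_project}.iam.gserviceaccount.com"
}

# Same grant as before, without reading the service account through a data source.
resource "google_bigquery_dataset_iam_member" "prod_analytics_bq" {
  project    = "data_project"
  dataset_id = "prod-dataset"
  role       = "roles/bigquery.dataEditor"
  member     = local.analytics_sa_member
}
```

The trade-off is that Terraform no longer reads the account during planning, so a typo in the ID would not be caught by the lookup.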

That concludes the Terraform part. Below you can find a visual representation of the file structure:

Cloud functions

In the cloud functions, we use a Firebase functions wrapper. Therefore we can define the function like this:

import * as functions from 'firebase-functions';

import handler from './businessLogic';

export const paymentProcessorFunction = functions.runWith({

serviceAccount: 'payment-processor@'

}).https.onRequest(handler);

Here we use the special syntax with a trailing @, which appends the project to the service account. This way we don’t have to parametrize anything to define the functions in different projects.

Alternatively, we could define the cloud function in Terraform and use the google_cloudfunctions_function resource together with the service_account_email attribute. This is not how we do it at the moment, but it would work nicely with the exported outputs of our modules.
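A rough sketch of that alternative is shown here. It assumes the resource lives inside the iam module, where module.payment_processor_sa is in scope; the runtime, the region and the two source archive variables are hypothetical and only there to make the example complete.

```hcl
# Hypothetical variables pointing at the uploaded function source archive.
variable "functions_source_bucket" {
  description = "Bucket that holds the zipped function source"
  type        = string
}

variable "functions_source_object" {
  description = "Object name of the zipped function source"
  type        = string
}

resource "google_cloudfunctions_function" "payment_processor" {
  project = var.project
  region  = "europe-west1" # assumption
  name    = "paymentProcessorFunction"
  runtime = "nodejs20" # assumption

  entry_point  = "paymentProcessorFunction"
  trigger_http = true

  source_archive_bucket = var.functions_source_bucket
  source_archive_object = var.functions_source_object

  # The function runs as the dedicated service account created by the module.
  service_account_email = module.payment_processor_sa.email
}
```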

Summary

I hope this makes it easier to understand how to use Terraform to define service accounts for cloud functions that use multiple GCP services. In this article:

- I defined a reusable module for defining a single service account

  - in particular, I showed an example of how to use the google_project_iam_member, google_secret_manager_secret_iam_member, and google_service_account resources in Terraform

- I defined a module for the IAM configuration of the whole project

- I used these modules for specific projects adding missing permissions to match specific criteria.

- I configured the service account to be used by the cloud function
